◎AI

AI Video Depth Estimation

CLI Tool

CLI tool that generates depth-map videos from any footage using transformer-based monocular depth estimation, with grayscale + heatmap export modes.

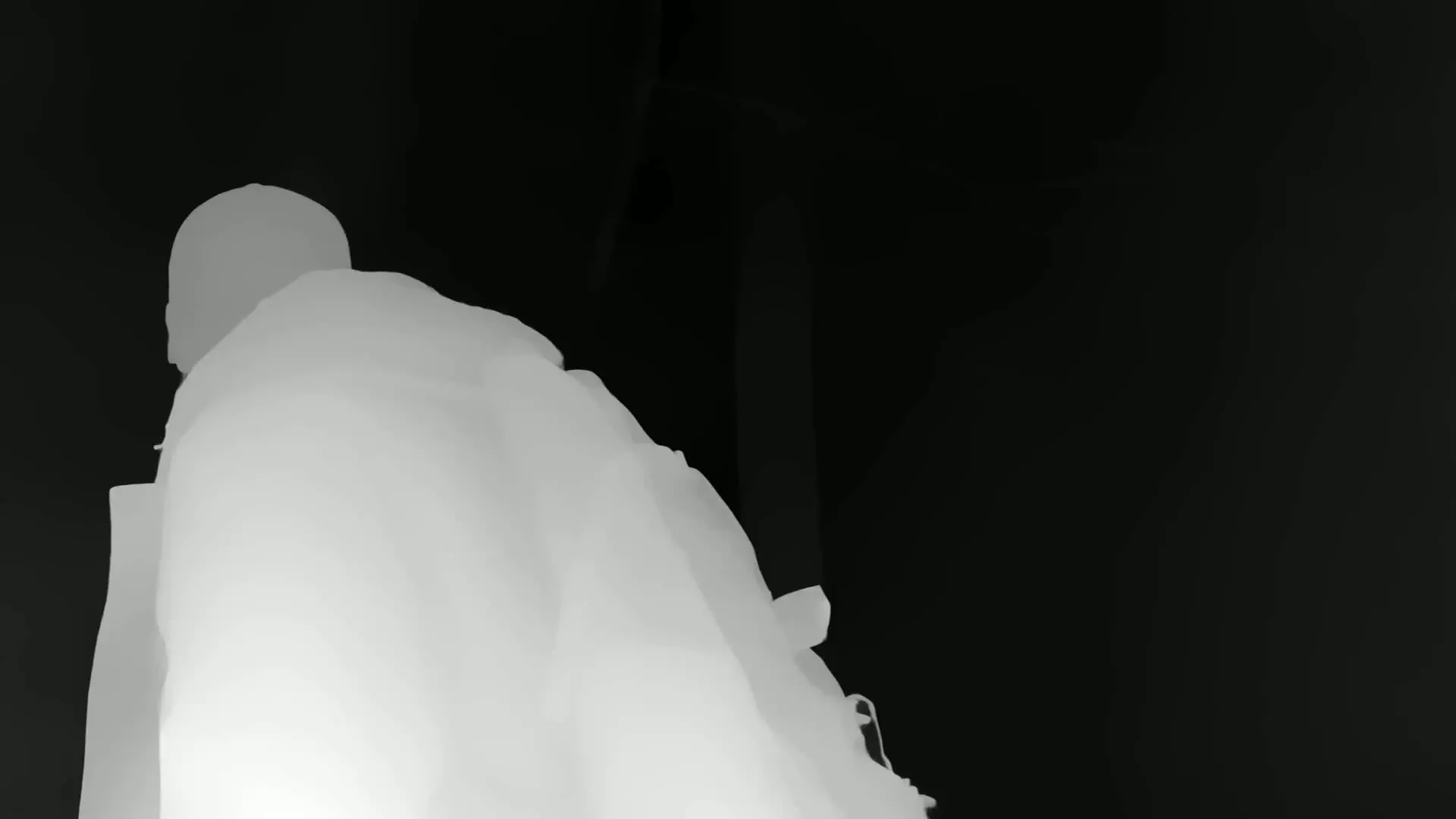

Apple Depth Pro Map

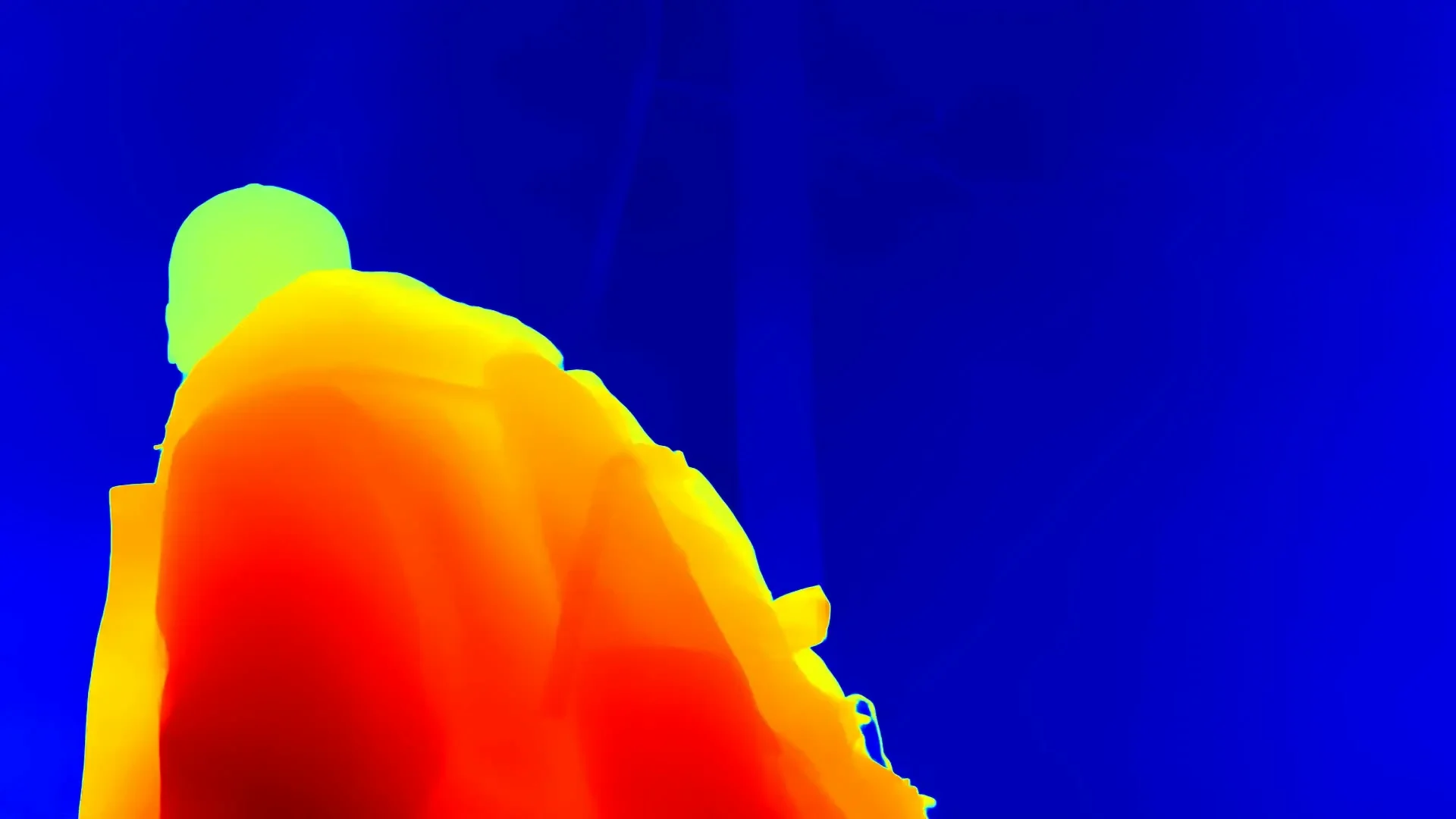

Spectral Heatmap - Apple Depth Pro

Command-Line Interface + Export Settings

A CLI wrapper lets you run the tool from terminal with a video input, model size selection, and output styling (grayscale vs heatmap). It also auto-creates an exports/ folder and generates a smart default filename.

1

def parse_args():2

parser = argparse.ArgumentParser(3

description="AI Video Depth Estimation using Depth Anything V2"4

)5

parser.add_argument("input", type=str, help="Path to input video file")6

parser.add_argument("--model", type=str, default=DEFAULT_MODEL,7

choices=list(MODEL_CONFIGS.keys()))8

parser.add_argument("--output", type=str, help="Path to output video file")9

10

group = parser.add_mutually_exclusive_group()11

group.add_argument("--viz", action="store_true",12

help="Output colorized depth heatmap (Inferno)")13

group.add_argument("--grayscale", action="store_true", default=True,14

help="Output raw grayscale depth (default)")15

16

parser.add_argument("--spectral", action="store_true",17

help="Use Spectral-like colormap (requires --viz)")18

return parser.parse_args()

A user interface for a video tools toolbox displaying options for depth estimation, including a selection for an input video file and model size.

Device Selection + Model Loading (Hugging Face Transformers)

The script automatically chooses CUDA if available, then loads both:

- an AutoImageProcessor for pre-processing frames

- an AutoModelForDepthEstimation for inference

1

device = "cuda" if torch.cuda.is_available() else "cpu"2

print(f"Using device: {device}")3

4

processor = AutoImageProcessor.from_pretrained(model_id, trust_remote_code=True)5

model = AutoModelForDepthEstimation.from_pretrained(model_id, trust_remote_code=True)6

7

model.to(device)8

model.eval()

Video Reader/Writer Pipeline (OpenCV)

The tool opens the source video with OpenCV, reads its properties, then creates an output writer that matches the input resolution + FPS.

1

cap = cv2.VideoCapture(str(input_path))2

w = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))3

h = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))4

fps = cap.get(cv2.CAP_PROP_FPS)5

total_frames = int(cap.get(cv2.CAP_PROP_FRAME_COUNT))6

7

fourcc = cv2.VideoWriter_fourcc(*"mp4v")8

out = cv2.VideoWriter(str(output_path), fourcc, fps, (w, h))

Per-Frame Inference (Frame to Depth)

Each frame is:

- read from OpenCV (BGR)

- converted to RGB and wrapped as a PIL Image

- processed into tensors for the model

- inferred with torch.no_grad() for speed

1

ret, frame = cap.read()2

frame_rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)3

image = Image.fromarray(frame_rgb)4

5

inputs = processor(images=image, return_tensors="pt")6

inputs = {k: v.to(device) for k, v in inputs.items()}7

8

with torch.no_grad():9

outputs = model(**inputs)10

predicted_depth = outputs.predicted_depth

Depth Resizing + Normalization

The depth output is resized back to the original frame dimensions using bicubic interpolation, then normalized into an 8-bit range (0–255) for video encoding.

1

prediction = torch.nn.functional.interpolate(2

predicted_depth.unsqueeze(1),3

size=(h, w),4

mode="bicubic",5

align_corners=False,6

)7

8

depth_output = prediction.squeeze().cpu().numpy()9

10

# Normalize to 0–255 for display/video output11

d_min, d_max = depth_output.min(), depth_output.max()12

norm = (depth_output - d_min) / (d_max - d_min + 1e-6)13

depth_uint8 = (norm * 255).astype(np.uint8)

Interested in this experiment?